Section M is where the solicitation tells you exactly how your proposal will be evaluated. It's not a suggestion. It's the rulebook. And as former government evaluators, we can tell you that most proposal teams treat it like background reading instead of the literal scoring criteria their proposal will be judged against.

Here's what's frustrating from the evaluator's chair: Section M isn't vague. It's explicit. Yet proposal after proposal arrives with the same gaps, the same unfounded claims, and the same missing documentation. The result? A proposal that lands at "Good" and stays there, not because it's bad, but because the written record doesn't justify anything higher.

Let's walk through the ten mistakes we see repeatedly. These aren't subjective. They're the disconnects evaluators notice within the first few pages of review.

1. You're Responding to Section L, Not Section M

Section L tells you what to include. Section M tells you what gets scored. They're not the same thing.

If Section L says "describe your approach" and Section M says "demonstrate material risk reduction through operationalized mitigation strategies," you can't just describe your approach and expect credit. The evaluation criteria demand documentation of risk reduction. If the written record doesn't address that specific element, the evaluator has nothing to document as a strength.

Most proposals comply with Section L and assume that covers Section M. It doesn't.

2. Your Past Performance Narrative Doesn't Match the Evaluation Sub-Factors

Section M often breaks past performance into sub-factors: relevance, recency, quality, and sometimes complexity or dollar value. Your narrative needs to explicitly address each one.

If the RFP scores relevance separately, the evaluator is looking for documented evidence that your prior contracts align with the scope of this solicitation. Saying "we have extensive experience" doesn't create a defensible record. Stating "Contract XYZ involved identical CLIN structures, labor categories, and security requirements under the same NAICS code" does.

If recency is a sub-factor and your examples are from 2019, you need to address that gap directly, or expect it to limit your rating.

3. You're Claiming Benefits Without Quantification

This one shows up constantly. Proposals state things like "our approach will improve efficiency" or "reduce costs" without providing measurable detail.

Here's what the evaluator needs: by how much, over what period, and compared to what baseline?

Efficiency improvements need to be quantified. Cost reductions need to be explained and supported. If you're claiming a benefit, the evaluator must be able to document it as a material advantage. Vague claims don't meet that standard.
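As a rough illustration of the three questions above (how much, over what period, compared to what baseline), here is a minimal Python sketch that turns a claim into a quantified statement. The `BenefitClaim` class, its field names, and the ticket-resolution figures are all hypothetical, invented for this example; they do not come from any solicitation or real contract.

```python
from dataclasses import dataclass


@dataclass
class BenefitClaim:
    """A claimed benefit with the details an evaluator can document.

    All names and fields here are illustrative assumptions.
    """
    description: str
    baseline: float   # measured value before the proposed change
    projected: float  # value the proposal commits to
    unit: str
    period: str       # time frame over which the change is claimed

    def percent_change(self) -> float:
        # Change relative to the stated baseline, as a percentage.
        return (self.projected - self.baseline) / self.baseline * 100

    def statement(self) -> str:
        change = self.percent_change()
        direction = "reduction" if change < 0 else "increase"
        return (
            f"{self.description}: {abs(change):.1f}% {direction} "
            f"({self.baseline:g} to {self.projected:g} {self.unit}) {self.period}"
        )


# Hypothetical numbers, for illustration only.
claim = BenefitClaim(
    description="Average ticket resolution time",
    baseline=48,
    projected=36,
    unit="hours",
    period="within the first 12 months of performance",
)
print(claim.statement())
```

The point of the structure is that a claim cannot be built without a baseline, a projected value, and a period. If any of those is missing in your draft, the sentence is not yet something an evaluator can cite.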

4. Risk Mitigation Is Described, Not Operationalized

Section M typically evaluates your ability to identify and mitigate performance risk. But most proposals just describe generic risk mitigation strategies.

The evaluator isn't scoring whether you understand what risk mitigation is. They're scoring whether your documented approach actually reduces risk in a way that can be defended in the evaluation record.

Saying "we will conduct weekly status meetings to mitigate schedule risk" is a description. Explaining how weekly milestone tracking against the performance work statement triggers corrective action protocols before delays occur, and providing the documented process for doing so, is operationalized mitigation.

The difference determines whether the evaluator can justify a strength.

5. Your Staffing Plan Doesn't Address the Actual Evaluation Criteria

If Section M scores "relevant qualifications and experience," the evaluator is looking for documented alignment between your proposed personnel and the specific requirements of the solicitation.

Résumés are supporting documentation. They don't replace narrative justification. If the requirement involves cybersecurity compliance and your proposed lead has never worked on a FedRAMP-authorized system, that gap will be noted, whether you address it or not.

The proposal must explicitly connect personnel qualifications to the evaluated criteria. If it doesn't, the evaluator has no basis to award credit.

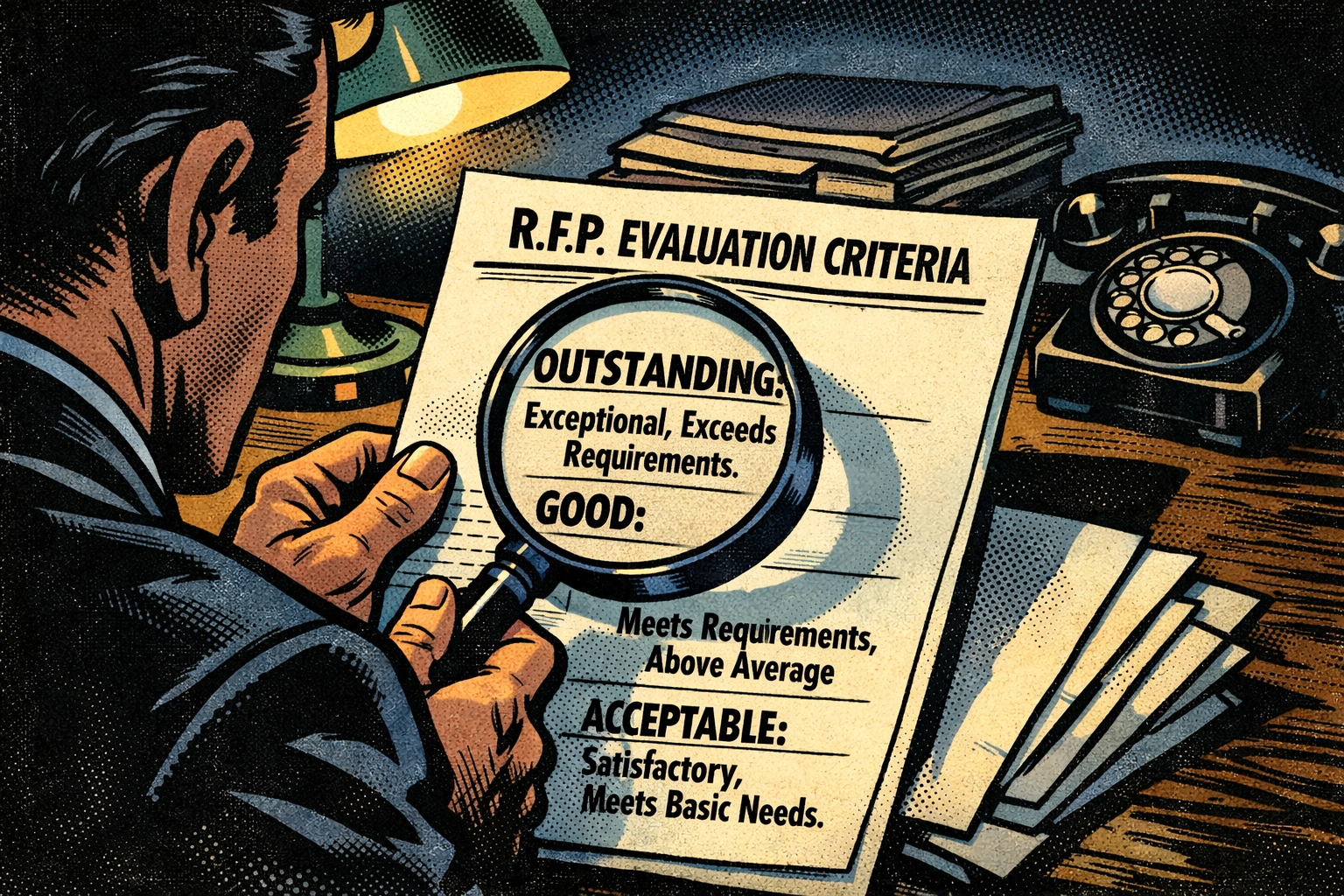

6. You're Treating Adjectival Definitions as Negotiable

Section M defines what "Outstanding," "Good," "Acceptable," and "Marginal" mean for that specific procurement. Those definitions are binding.

If "Outstanding" requires that strengths "materially reduce performance risk," you can't get an Outstanding rating by listing features. The written record must demonstrate material risk reduction in language the evaluator can cite in their documentation.

Most proposals ignore these definitions entirely and write in a generic persuasive style. That's a structural mismatch. The proposal needs to be written in the language of the rating definitions.

7. You're Not Cross-Referencing Proposal Sections to Evaluation Factors

Evaluators work factor by factor. If Section M has five technical evaluation factors, the evaluator scores them independently. They're not re-reading your entire proposal five times looking for relevant content.

If your risk mitigation content is buried in a staffing narrative and the evaluator is scoring the management approach factor, they may not find it. That's not their job. Your job is to ensure the relevant content appears where the evaluator is looking.

Cross-referencing helps, but only if the material is actually present in the evaluated section. Pointing to content that doesn't substantiate the claim doesn't solve the problem.
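One way to check this before submission is a simple factor-by-factor pass over the draft. The Python sketch below is only a starting point under stated assumptions: the factor names, keywords, and section text are hypothetical, and plain keyword matching is a crude stand-in for a human reading each section against Section M.

```python
# Hypothetical evaluation factors and the terms Section M attaches to them.
factor_keywords = {
    "Management Approach": ["risk mitigation", "corrective action"],
    "Staffing": ["qualifications", "key personnel"],
}

# Hypothetical draft text, keyed by the factor each section responds to.
proposal_sections = {
    "Management Approach": "We hold weekly status meetings with the COR.",
    "Staffing": (
        "Key personnel qualifications are summarized below. "
        "Our risk mitigation process is led by the program manager."
    ),
}


def find_misplaced_content(factor_keywords, proposal_sections):
    """Return (factor, keyword, other_sections) for each keyword that is
    missing from the section the evaluator will read for that factor."""
    gaps = []
    for factor, keywords in factor_keywords.items():
        own_text = proposal_sections.get(factor, "").lower()
        for keyword in keywords:
            if keyword in own_text:
                continue  # present where the evaluator is looking
            # Note where else the term appears, if anywhere.
            elsewhere = [
                name
                for name, text in proposal_sections.items()
                if name != factor and keyword in text.lower()
            ]
            gaps.append((factor, keyword, elsewhere))
    return gaps


gaps = find_misplaced_content(factor_keywords, proposal_sections)
for factor, keyword, elsewhere in gaps:
    location = ", ".join(elsewhere) if elsewhere else "nowhere in the draft"
    print(f"{factor}: '{keyword}' not found in this section (appears in: {location})")
```

A hit under "appears in" means the content exists but sits under the wrong factor, which is the situation described above. An empty list means it is missing outright.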

8. Your Transition Plan Assumes Continuity That Isn't Documented

If you're proposing a transition from an incumbent, Section M likely evaluates your ability to execute that transition without disruption. The evaluator needs to see documented processes, not assumptions.

Statements like "we will ensure seamless continuity" don't create a defensible record. What the evaluator needs: the specific steps you'll take, the timeline, the resources allocated, and how you'll verify continuity during the transition period.

If your transition plan relies on incumbent cooperation or agency support that isn't guaranteed in the solicitation, that's a risk. If you haven't documented how you'll mitigate that risk, the evaluator will note it.

9. You're Using Proprietary Claims as a Substitute for Explanation

Some proposals label entire sections as proprietary and then provide minimal explanation, assuming the label adds value. It doesn't.

Evaluators need to understand what you're proposing and why it matters. If your proprietary tool or methodology is relevant to an evaluation factor, you need to explain, in sufficient detail, how it functions and what measurable benefit it provides.

Saying "our proprietary system improves performance" and marking it proprietary doesn't give the evaluator anything to document as a strength. If you can't explain it clearly enough for evaluation, it can't be scored.

10. You're Not Addressing Evaluation Notes and Instructions

Section M often includes evaluation notes: small paragraphs that clarify how the government will interpret certain criteria. Most proposal teams skip right over them.

If a note says "the government will assess the degree to which proposed solutions are already operational versus developmental," and you're proposing a tool that's still in beta testing, you need to address that directly. Ignoring the note doesn't make the issue go away. It just means you didn't give the evaluator any mitigation to document.

These notes aren't filler. They're part of the evaluation standard.

Why This Matters in 2026

The federal procurement environment is tightening. Protests are up. Agencies are under increasing scrutiny to document their evaluation decisions. That means the written record matters more than ever.

If your proposal doesn't provide the evaluator with clear, substantiated, and measurable justification for a higher rating, it won't receive one, even if your solution is objectively better. The evaluator can only score what's in the written record.

Section M isn't a formality. It's the standard your proposal will be measured against. Before you submit, the question isn't whether your team thinks the proposal is strong. The question is whether an evaluator, working strictly from Section M, could defend a higher rating based solely on what you've written.

That determination should be clear before submission, not after the debrief.